
Gartner predicts that over 40% of agentic AI projects will be cancelled by 2027 due to unclear ROI.

Most organizations have the investment, the demos, and the pilots, but not the strategy that connects any of it to a commercial result.

This article covers what a real agentic AI strategy looks like, the four phases it has to move through, and how to get from a first working agent to enterprise-wide impact.

What is an Agentic AI Strategy?

An agentic AI strategy is a business-led plan that defines which processes AI will run, on what data foundations, under what governance, and toward what measurable outcomes, all defined before any agent or platform is built.

Most organizations do not have one. They have agents. They have AI PoCs. They have experiments running across two or three business units with varying degrees of management attention. What they are missing is an agentic AI strategy that connects the activity to a commercial result.

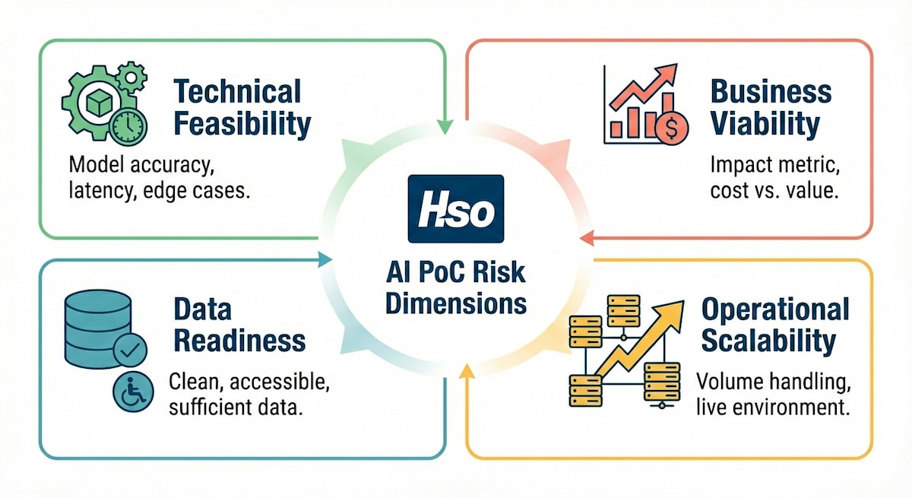

A proof of concept answers the question: can this agent work technically? A strategy answers a different one: what will it deliver commercially, and what has to be in place before it can?

Deloitte's 2025 enterprise survey found that 38% of organizations are piloting agentic AI solutions and only 11% are actively using agents in production.

Why Most Agentic AI Strategies Don't Deliver ROI

The failure rate is not random. 94% of AI projects stall for largely the same reasons, and those reasons are visible before a single agent is built.

McKinsey's November 2025 State of AI survey found that 88% of organizations use AI in at least one function and 62% are experimenting with agents. Fewer than 40% report any enterprise-level financial impact. Only around 10% have scaled agents in any single function.

The organizations contributing to those numbers are not making technology mistakes. They are making strategy mistakes, and the same five patterns appear consistently.

“Everybody right now is like a kid in a candy shop when it comes to AI. Where's the strategy? Where's the plan? If you don't have data to support a use case, if the fundamentals are broken, everything else is broken as well.”

Pattern 1 - Wrong agentic AI use case chosen: The process gets selected because it looks interesting or because someone in leadership saw a compelling demo. There are no defined success criteria (such as “cut overdue invoices by 15%”), no break-even calculation, and no acceptance threshold. When the agent ships, there is nothing to measure it against.

Pattern 2 - Data not ready: The agent is deployed on fragmented, inconsistent, or low-quality data. The most capable model available cannot compensate for data that is not aligned to support the use case. Garbage in, garbage out has not stopped being true.

Pattern 3 - Process not mature enough to automate: A process that lives in someone's head, in the informal judgment calls experienced staff make without thinking, cannot be reliably replicated by an agent. Agents execute the logic they are given. If that logic is incomplete, ambiguous, or undocumented, the agent surfaces that ambiguity at scale.

Pattern 4 - Agent built alongside the workflow, not inside it: An agent that sits next to a process is optional. Employees can use it or ignore it. An agent embedded inside a process is how work actually happens. Most implementations that stall by week four were designed as an optional layer, not as a structural part of how the work gets done.

Pattern 5 - AI change management treated as a follow-on: Adoption drops not because the agent fails technically but because it was never designed to become part of how people work. The tool launches, training happens once, and by week four most employees have returned to previous habits.
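Pattern 1 points to a missing break-even calculation and acceptance threshold. A minimal sketch of both follows, assuming a simple payback model: labour cost removed per month, minus the agent's running cost, divided into the one-off build cost. The class name, field names, and every figure are hypothetical illustrations, not HSO's method or benchmark data.

```python
import math
from dataclasses import dataclass


@dataclass
class UseCaseEconomics:
    """Hypothetical inputs for a simple payback model (illustrative only)."""
    runs_per_month: int           # how often the process executes
    minutes_saved_per_run: float  # manual effort the agent removes per run
    loaded_hourly_cost: float     # fully loaded cost of the staff doing the work
    build_cost: float             # one-off cost to build and deploy the agent
    monthly_run_cost: float       # licences, hosting, monitoring, upkeep


def monthly_net_saving(c: UseCaseEconomics) -> float:
    """Labour cost removed per month, minus the agent's running cost."""
    gross = c.runs_per_month * c.minutes_saved_per_run / 60 * c.loaded_hourly_cost
    return gross - c.monthly_run_cost


def break_even_months(c: UseCaseEconomics) -> float:
    """Months until cumulative net savings cover the build cost.

    Returns math.inf when the agent never pays back (net saving <= 0).
    """
    net = monthly_net_saving(c)
    if net <= 0:
        return math.inf
    return c.build_cost / net


def meets_threshold(c: UseCaseEconomics, max_payback_months: float) -> bool:
    """Acceptance threshold: approve only if payback fits the agreed window."""
    return break_even_months(c) <= max_payback_months


# Illustrative figures only, not benchmarks.
invoice_matching = UseCaseEconomics(
    runs_per_month=2_000, minutes_saved_per_run=6,
    loaded_hourly_cost=45, build_cost=40_000, monthly_run_cost=1_500,
)
print(round(break_even_months(invoice_matching), 1))  # 5.3
```

The same model also shows why volume matters: at 20 runs a month, the labour saved never covers the running cost, so `break_even_months` returns infinity and no payback window can be met.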

How to Build an Agentic AI Strategy: HSO’s Four Phases

HSO treats agentic AI strategy as a design-led discipline, not a technology implementation. The approach moves through four phases, each one building on the last, from initial assessment to full strategic transformation.

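The four phases move from a readiness assessment (Phase 1), to a first proven agent (Phase 2), to managing and scaling agents (Phase 3), and finally to reimagining how the business operates (Phase 4).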

In Phase 1, before any agent is built, an organization needs to establish where it actually stands: its AI maturity, its data readiness, and which processes are genuinely worth automating.

Organizations skip this phase because it feels slow. The pressure to show something running is real, and a readiness assessment does not produce a demo. But the cost of skipping it surfaces downstream, when agents miss ROI targets three months into a build that has already consumed significant budget.

“The right question is never 'how do we automate what we do?' It is 'what does success look like at the end of this process, and how do we design an agent that gets there?'”

Data is what agents run on. Poor data quality, fragmented sources, and missing business context produce agents that confidently give wrong answers.

Organizations in regulated industries with mature data management and data governance consistently move faster and see better returns than those that treat data readiness as something to address after initial deployment.

Not every candidate process is worth automating. Five criteria separate use cases worth building from ones that will disappoint:

| Criterion | What to Assess | 🟩 Green Signal | 🟥 Red Signal |
|---|---|---|---|
| Volume | How often the process runs | Daily or multiple times daily | Weekly or less |
| Cost | Current manual execution cost | Significant and visible | Negligible or hard to quantify |
| Measurability | Can outcomes be tracked? | Clear metric exists | Vague productivity claim |
| Process maturity | Is the workflow documented? | Defined, governed steps | Lives in informal judgment |
| Embeddability | Can it go inside the workflow? | Process can be redesigned around the agent | Agent would sit alongside, not inside |
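The five criteria can be turned into a repeatable screening check. The sketch below is illustrative only: it assumes each criterion is rated simply green or red, and it applies an assumed rule (not stated in the table) that a use case proceeds only when all five are green. The names `Signal`, `red_flags`, and `worth_building` are hypothetical.

```python
from enum import Enum


class Signal(Enum):
    GREEN = "green"
    RED = "red"


# The five criteria from the table, in table order.
CRITERIA = ("volume", "cost", "measurability", "process_maturity", "embeddability")


def red_flags(ratings: dict[str, Signal]) -> list[str]:
    """Return the criteria rated red, in table order.

    Raises ValueError if any criterion is unrated, so an incomplete
    assessment cannot pass by omission.
    """
    missing = [c for c in CRITERIA if c not in ratings]
    if missing:
        raise ValueError(f"unrated criteria: {missing}")
    return [c for c in CRITERIA if ratings[c] is Signal.RED]


def worth_building(ratings: dict[str, Signal]) -> bool:
    """Assumed rule: proceed only when all five criteria are green."""
    return not red_flags(ratings)


# Hypothetical ratings for two candidate processes.
expense_entry = {c: Signal.GREEN for c in CRITERIA}
board_pack = dict(expense_entry, volume=Signal.RED, embeddability=Signal.RED)

print(worth_building(expense_entry))  # True
print(red_flags(board_pack))          # ['volume', 'embeddability']
```

Returning the list of red criteria, rather than a single score, keeps the reason for a rejection visible, which is what a prioritized roadmap needs.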

The fastest path to enterprise confidence is a single working agent with a clear ROI case, built inside the workflow, governed from day one, and validated with real users before scale.

Phase 2 is where assessment turns into action. The approach is deliberate: build one high-confidence use case, prove the commercial case, and use that result as the foundation for what comes next. The full enterprise roadmap can wait. The first agent cannot.

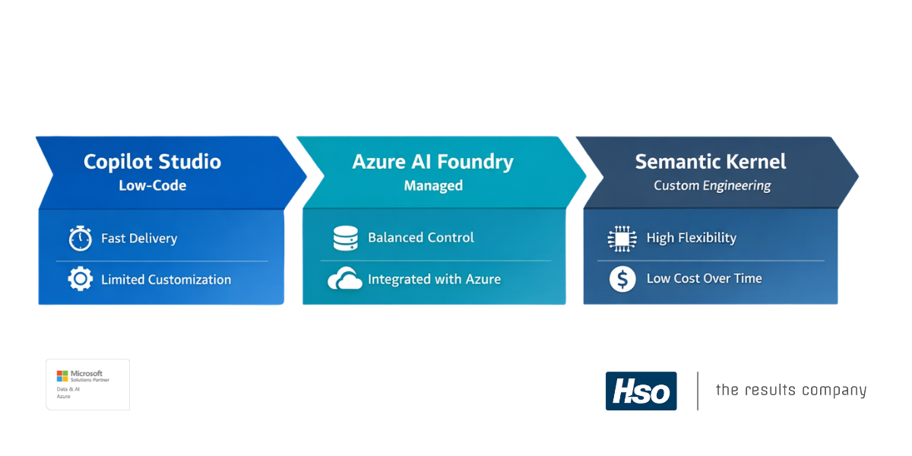

Building with production-tested accelerators: Microsoft Copilot Studio and Azure AI Foundry allow agents to be built, deployed, and monitored within existing Microsoft environments. HSO's pre-built agent library moves qualified use cases to production in days or weeks rather than months.

The Expense Entry Agent illustrates the approach to employee administration. Receipt photos submitted in Microsoft Teams are processed by the agent, which extracts the data, matches it to the correct expense categories and project codes, and auto-populates the relevant fields in Dynamics 365. Compliance improves as friction drops.

Three questions need answers before any agent goes live:

“Companies may not necessarily know how the agent will behave once it's actually out there. If an agent runs, who's accountable for it? Who's responsible for its behavior, and what if it makes a mistake?”

Once initial value is proven, the strategy shifts from deployment to management: treating agents as operational workloads, building adoption across the workforce, and establishing the AI governance structures needed to scale safely.

Phase 3 is where most organizations discover that their initial deployment assumptions do not hold at scale. A single well-governed agent is manageable. Ten agents across three business units, each with different data sources, different accountability owners, and different performance baselines, require something more deliberate.

The adoption pattern is consistent: week one sees high engagement as users explore the new tool. By week four, most have returned to previous habits. No one made a decision to stop.

Three practices that build genuine trust in an agent:

With 90% of organizations expecting a critical AI skills shortage by 2026, structured enablement and leadership modeling are required conditions, not afterthoughts. Adoption, not deployment, is the primary success metric.
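If adoption is the primary success metric, it helps to measure it the same way every time. One minimal sketch, assuming usage logs can be reduced to hypothetical `(user_id, week_number)` pairs, is cohort retention: the share of users active in week one who are still active in week four.

```python
from collections import defaultdict


def weekly_active_users(events: list[tuple[str, int]]) -> dict[int, set[str]]:
    """Group (user_id, week_number) usage events into active-user sets per week."""
    active: dict[int, set[str]] = defaultdict(set)
    for user_id, week in events:
        active[week].add(user_id)
    return dict(active)


def cohort_retention(events: list[tuple[str, int]], start_week: int, later_week: int) -> float:
    """Share of users active in start_week who are still active in later_week."""
    active = weekly_active_users(events)
    cohort = active.get(start_week, set())
    if not cohort:
        return 0.0
    return len(cohort & active.get(later_week, set())) / len(cohort)


# Hypothetical usage log: four users try the agent in week 1, one remains by week 4.
log = [("ana", 1), ("ben", 1), ("cy", 1), ("dee", 1),
       ("ana", 2), ("ben", 2), ("ana", 4)]
print(cohort_retention(log, start_week=1, later_week=4))  # 0.25
```

Tracking the week-one cohort, rather than total weekly users, stops new sign-ups from masking the week-four drop-off described above.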

Agents are operational workloads. They are not software that ships once and runs indefinitely without attention. HSO’s AI managed services look after the full agent lifecycle: usage and performance monitoring, periodic review and housekeeping, security and access validation, governance documentation, and knowledge source maintenance.

“One of the biggest issues in the world of AI is adoption and change management. The reality is that buying a tool is not the same as embedding it; an agent alongside the process becomes optional, but one embedded in the workflow becomes an integral part of the process.”

Phase 4 is not about deploying more agents. It is about reimagining how the business operates, with intelligent solutions running as the foundational layer of how work gets done.

The organizations that reach Phase 4 have moved past the question of whether agentic AI delivers value. They are asking a different one: what can the business do now that it could not do before?

The shift from human by default to human by exception is visible across functions in a Phase 4 organization.
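One common way to make “human by exception” concrete is threshold-based routing: the agent acts on its own unless a rule sends the item to a person. The sketch below assumes a hypothetical agent that reports a confidence score and a monetary amount for each proposed action; the 0.9 confidence and 10,000 amount thresholds are placeholders that governance would set per process.

```python
from dataclasses import dataclass


@dataclass
class AgentDecision:
    """Hypothetical output of an agent step, e.g. a proposed invoice match."""
    item_id: str
    confidence: float  # agent's self-reported confidence, 0.0 to 1.0
    amount: float      # monetary value the action would commit


def route(decision: AgentDecision, min_confidence: float = 0.9,
          max_auto_amount: float = 10_000.0) -> str:
    """Return 'auto' to let the agent act, or 'human' to queue for review.

    Thresholds are illustrative assumptions, not recommended values.
    """
    if decision.confidence < min_confidence:
        return "human"  # agent is unsure: a person decides
    if decision.amount > max_auto_amount:
        return "human"  # high-value action: a person approves
    return "auto"       # routine case: the agent proceeds on its own


print(route(AgentDecision("INV-001", confidence=0.97, amount=850.0)))    # auto
print(route(AgentDecision("INV-002", confidence=0.62, amount=850.0)))    # human
print(route(AgentDecision("INV-003", confidence=0.99, amount=25_000.0)))  # human
```

The share of items routed to `"human"` is itself a useful signal: a falling exception rate suggests the process is ready for wider autonomy, while a rising one flags a data or process problem.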

The telemetry and feedback loops built in Phase 3 do more than monitor performance. They surface new AI automation opportunities and provide the data needed to refine existing agents. Each production agent generates insight that informs the next one. The AI strategy compounds rather than plateaus.

HSO's Approach to Agentic AI Strategy

HSO combines over 30 years of Microsoft platform expertise with a structured, business-led approach that maps directly to the four phases, from strategy assessment and use case discovery through to production-ready agents, lifecycle governance, and long-term management and optimization.

HSO's starting point is always the outcome question, not the technology recommendation.

Every engagement begins with an assessment that positions the organization and produces a prioritized roadmap before any build work begins. The target is a working agent with a defined commercial return, not an impressive demo.

HSO builds agentic AI exclusively on Microsoft's platform, ensuring agents integrate directly with the systems organizations already use.

Rather than building a custom AI agent for every deployment, HSO maintains a library of industry-specific, production-tested agents built for the most common enterprise processes.

Each agent is built on Copilot Studio and Dynamics 365, tested in real enterprise environments, and designed to be configured for specific business contexts. Organizations can see results in days rather than months.

Agentic AI refers to AI systems that can plan, decide, and take action across business processes with minimal human intervention, going beyond generating answers to executing multi-step workflows autonomously.

Unlike standard generative AI, which responds to prompts, agentic AI agents can read emails, process orders, match payments, update records, and trigger downstream actions without a person initiating each step.

For a full explanation of how agentic AI differs from AI agents, see Agentic AI vs AI agents.

The strategy and assessment work (maturity evaluation, use case prioritization, data readiness review, and roadmap) typically takes several weeks.

A first working agent can follow shortly after. Full enterprise scale takes longer, but the ROI case rarely requires it upfront. The fastest path is a clear strategy followed immediately by a high-confidence first deployment, not an extended planning phase that delays the first proof of value.

The biggest risk is a strategy failure, not a technology failure. Gartner predicts that over 40% of agentic AI projects will be cancelled by 2027, primarily because costs escalate and ROI cannot be demonstrated.

The most common causes are selecting the wrong use case, deploying on inadequate data foundations, building the agent alongside rather than inside the workflow, and treating governance as a post-deployment activity.

Adopting agentic AI does not require replacing existing systems. HSO's approach builds agents on top of existing Microsoft platform investments.

Copilot Studio, Azure AI Foundry, and Dynamics 365 provide the agent infrastructure.

Microsoft Fabric consolidates data without replacing source systems. The most effective agentic strategies extend what organizations already have rather than replacing it.
