Machine Learning Consulting Services

Better predictions. Faster decisions. Measurable results.

Machine Learning That Works in the Real World

Top 1% Microsoft Partnership

HSO has been a member of the Microsoft Inner Circle for 21 consecutive years, placing it in the top 1% of Microsoft partners globally. As an Azure Expert Managed Services Provider and Microsoft Fabric Featured Partner, HSO has direct access to Microsoft engineering teams and early-access programs. This relationship ensures HSO's machine learning solutions are built on the most current Microsoft platform capabilities.

A Proven Data-First Philosophy

Machine learning models are only as good as the data they are trained on. HSO's engagements always begin with the data estate: auditing existing data sources, eliminating silos, and building a clean, governed foundation before any model is developed. This approach prevents the most common cause of ML project failure: deploying models on poor-quality, fragmented, or incomplete data.

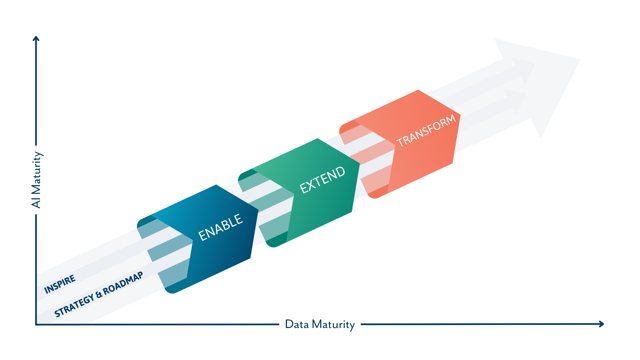

Think Big, Start Small, Act Fast

HSO's ML methodology is deliberately agile. Rather than committing to large, slow engagements, HSO identifies the single highest-impact use case and delivers a working machine learning MVP quickly, generating measurable ROI before scaling. This approach reduces the risk of pilot paralysis and ensures leadership can see the value of ML in weeks, not months.

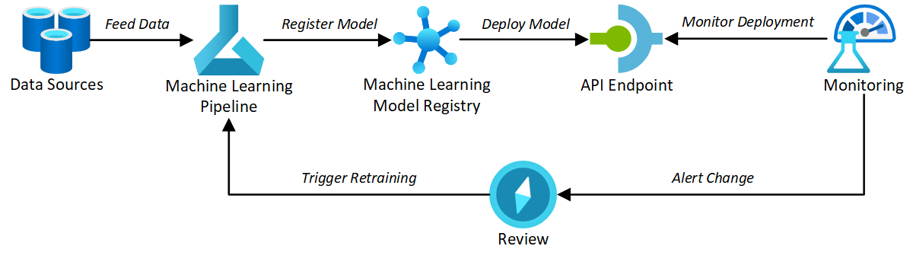

Mature MLOps Practices

Deploying a model is only the beginning. Without robust MLOps, models degrade, drift, and ultimately fail to deliver the business value they were built for. HSO's established MLOps practices govern the full model lifecycle, from training and deployment through to continuous monitoring and retraining, ensuring your ML investments remain accurate, reliable, and operationally sound over time.

Our Machine Learning Technology Stack

Azure Machine Learning

Azure Databricks

Azure Data Factory

Azure AI Foundry

Dynamics 365

Dataverse

Microsoft Power Platform

Our customers

Customers That Rely on Our Machine Learning Expertise

Common Machine Learning Challenges and How HSO Solves Them

Enterprise ML projects fail more often than they succeed—not because the technology does not work, but because the conditions for success are not established before development begins. HSO has navigated these challenges across hundreds of engagements, developing a delivery methodology that addresses the most common failure points.

Data That Is Not Ready for Machine Learning

Challenge: The most common reason ML projects fail is poor data quality. Organizations often discover that their data is fragmented across disconnected systems, inconsistently formatted, or missing critical historical records. Training models on this data produces unreliable outputs and erodes trust in ML before the technology has had a fair chance.

Solution: HSO's data-first approach means the engagement begins with a thorough data audit. Using Microsoft Fabric and Azure Databricks, HSO consolidates data silos, cleanses and enriches training datasets, and establishes a governed data estate that provides ML models with the accurate, reliable inputs they need to perform.
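To make the idea of a data audit concrete, here is a minimal sketch of the kind of check such an audit might start with: counting missing values and duplicate keys in a set of records. It is an illustration only, not HSO's tooling or a Microsoft Fabric or Azure Databricks API. The function name `audit_records` and the field names are hypothetical.

```python
def audit_records(records, required_fields, key_field):
    """Summarize missing values and duplicate keys in a list of dict records.

    Hypothetical helper for illustration; names are assumptions, not a real API.
    """
    missing = {name: 0 for name in required_fields}
    seen = set()
    duplicates = set()

    for row in records:
        # Count fields that are absent, None, or an empty string.
        for name in required_fields:
            if row.get(name) in (None, ""):
                missing[name] += 1

        # Track keys that appear more than once.
        key = row.get(key_field)
        if key is None:
            continue
        if key in seen:
            duplicates.add(key)
        seen.add(key)

    return {
        "rows": len(records),
        "missing": missing,
        "duplicate_keys": sorted(duplicates, key=str),
    }


# Example with made-up records: one missing amount, one empty date, one repeated id.
sample = [
    {"id": 1, "amount": 10, "date": "2024-01-01"},
    {"id": 2, "amount": None, "date": "2024-01-02"},
    {"id": 1, "amount": 5, "date": ""},
]
report = audit_records(sample, required_fields=["amount", "date"], key_field="id")
```

A real audit would go further, covering schema consistency, value ranges, and historical coverage, but even a summary like this can show whether a dataset is ready for model training.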

Models That Degrade After Deployment

Challenge: A machine learning model that performs well in testing can rapidly deteriorate once it encounters live production data. As market conditions, customer behaviors, and business processes evolve, the statistical patterns a model was trained on become less representative—a phenomenon known as model drift. Without monitoring, these changes go undetected until the model is producing meaningfully wrong outputs.

Solution: HSO implements continuous model monitoring as a standard component of every ML deployment. Production models are tracked against real-time data using Azure Machine Learning's monitoring capabilities, with automated alerts triggering retraining pipelines when performance drops below defined thresholds. This ensures models stay accurate over time, not just at launch.
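The threshold-based trigger described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not Azure Machine Learning's monitoring API: the class `PerformanceMonitor`, the accuracy metric, and the default threshold and window size are all hypothetical choices.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class PerformanceMonitor:
    """Tracks rolling accuracy of a deployed model (hypothetical helper)."""

    threshold: float = 0.85   # assumed minimum acceptable accuracy
    window_size: int = 100    # assumed number of recent predictions to score
    outcomes: deque = field(default_factory=deque)

    def record(self, predicted, actual) -> None:
        # Store whether the prediction matched the observed outcome,
        # keeping only the most recent `window_size` results.
        self.outcomes.append(predicted == actual)
        if len(self.outcomes) > self.window_size:
            self.outcomes.popleft()

    def rolling_accuracy(self) -> float:
        if not self.outcomes:
            return 1.0
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self) -> bool:
        # Decide only once the window is full, to avoid alerting on tiny samples.
        window_full = len(self.outcomes) == self.window_size
        return window_full and self.rolling_accuracy() < self.threshold


# Example with made-up predictions: 3 of 5 correct, below a 0.8 threshold.
monitor = PerformanceMonitor(threshold=0.8, window_size=5)
for predicted, actual in [(1, 1), (0, 0), (1, 0), (0, 1), (1, 1)]:
    monitor.record(predicted, actual)
```

In production, a check like `needs_retraining()` would typically raise an alert or start a retraining pipeline rather than simply return a flag. It would also be paired with checks on input data distributions, since labels for live predictions often arrive late.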

No Clear Business Case or ROI

Challenge: Machine learning projects are frequently stalled at the executive level because they lack a credible financial justification. Data science teams present technically impressive models, but leadership cannot connect them to measurable revenue impact, cost reduction, or productivity gain—and budgets are cut before any value is realized.

Solution: HSO's discovery process begins by identifying the specific business metric each ML model is designed to improve. Every engagement is scoped around a defined outcome, whether that is reducing unplanned downtime, improving forecast accuracy, or decreasing customer churn. HSO's "think big, start small, act fast" methodology delivers measurable results quickly, giving leaders the evidence they need to justify further investment.

The Skills Gap in ML Delivery and Operations

Challenge: Building and maintaining enterprise-grade ML models requires a combination of data engineering, data science, and MLOps expertise that most organizations do not have in-house. Attempting to hire these skills is expensive and time-consuming. Attempting to upskill existing teams without structured support often produces inconsistent results.

Solution: HSO provides both the delivery capability and the knowledge transfer. HSO's MLOps consultants execute the technical work, while deliberately building internal understanding through training sessions, documentation, and collaborative delivery. Organizations emerge from engagements with a functioning ML capability and a team that can maintain and extend it independently.

Scaling Beyond a Single ML Pilot

Challenge: Many organizations successfully deliver one ML model (a demand forecast, a churn score, or a maintenance predictor) and then struggle to scale. Running multiple isolated models without a common platform creates data duplication, inconsistent tooling, AI security gaps, and significant overhead for the data science team trying to manage it all.

Solution: HSO advocates for, and delivers, centralized ML platforms on Azure Machine Learning, following the approach it implemented for Stolt-Nielsen. A unified platform provides a single, governed environment for all models: shared data pipelines, standardized deployment processes, consistent monitoring, and secure access controls. This architecture makes scaling from one model to many a structured, manageable process rather than a technical burden.

Governance, Ethics, and Compliance

Challenge: As ML models are used to drive consequential decisions such as credit assessments, maintenance scheduling, customer targeting, and hiring, the need for explainability, auditability, and compliance alignment becomes critical. Organizations face growing regulatory pressure to demonstrate that algorithmic decisions are fair, transparent, and traceable.

Solution: HSO embeds responsible AI principles into every ML engagement. Models are documented with clear lineage, input features are reviewed for potential bias, and decision outputs are logged for auditability. All custom AI solutions are built within the client's Microsoft Azure tenant, ensuring data never leaves the governed environment, and HSO aligns model governance with applicable AI compliance frameworks from the outset.
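As a rough illustration of what logging decision outputs for auditability can involve, the sketch below builds one audit entry per prediction, recording the model version and a hash of the inputs so a decision can later be traced. The function `build_audit_record` and its field names are hypothetical, not an HSO or Azure interface.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_audit_record(model_name, model_version, features, prediction):
    """Return a JSON-serializable audit entry for one model decision.

    Hypothetical helper for illustration; field names are assumptions.
    """
    # Canonical JSON (sorted keys) so identical inputs always hash the same.
    payload = json.dumps(features, sort_keys=True, separators=(",", ":"))
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model": model_name,
        "version": model_version,
        "input_sha256": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        "prediction": prediction,
    }


# Example with made-up values for a churn model.
entry = build_audit_record(
    "churn-score", "1.2.0", {"tenure_months": 12, "plan": "basic"}, 0.73
)
log_line = json.dumps(entry)
```

Hashing the inputs rather than storing them keeps sensitive values out of the log while still letting an auditor confirm which inputs produced a given decision. Whether to store hashes or full inputs depends on the applicable compliance requirements.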