LLM Development Services

Move Beyond Off-the-Shelf AI

Most organizations start their AI journey with general-purpose models. They quickly hit a wall. Public LLMs lack the proprietary context, domain expertise, and security controls that enterprise operations demand. The result is hallucinations, data exposure risks, and AI that sounds impressive in a demo but fails in production.

HSO's custom LLM development services take you from experimentation to enterprise-grade AI. Built on Microsoft Azure AI Foundry and backed by HSO's data-first methodology, we deliver large language models that are grounded in your data, secured within your infrastructure, and designed to generate measurable business outcomes.

Data-First Methodology

HSO does not start with models. HSO starts with data. Before any LLM development begins, HSO assesses your data estate using Microsoft Fabric and Microsoft Purview to clean, structure, govern, and secure the information that will feed your models. This data-first approach is why HSO's LLM solutions deliver accurate, trustworthy outputs where others produce hallucinations and unreliable results.

Verified Microsoft AI Expertise

HSO holds the "Build AI Apps on Microsoft Azure" specialization, a credential that verifies technical expertise in deploying AI using Azure OpenAI, Azure Cognitive Services, and Azure Machine Learning. HSO is also a recognized Azure Expert MSP and holds all six Microsoft Cloud Partner Designations.

Security and Compliance by Design

Every LLM solution HSO builds operates within Microsoft's enterprise security framework. RAG architectures enforce role-based access control so users only retrieve documents they are authorized to view. Data governance through Microsoft Purview ensures compliance with GDPR, the EU AI Act, and industry-specific regulations. Sensitive data remains external to model weights, supporting the "right to be forgotten" without costly retraining cycles.
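To make the access-control idea concrete, here is a minimal sketch of role-filtered retrieval. It is an illustration only, not HSO's implementation: the `Document` class, the `allowed_roles` field, the toy word-overlap `score`, and the sample corpus are all hypothetical, and a real deployment would typically push the role filter down into the search index rather than filter in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset  # hypothetical field: roles permitted to read this document


def score(query: str, doc: Document) -> int:
    """Toy relevance score: number of distinct query words found in the document."""
    doc_words = set(doc.text.lower().split())
    return sum(1 for word in set(query.lower().split()) if word in doc_words)


def retrieve(query: str, user_roles: set, corpus: list, top_k: int = 3) -> list:
    """Return up to top_k relevant documents that the user is authorized to view."""
    # Filter by role before ranking, so unauthorized text never
    # reaches the prompt that is sent to the model.
    visible = [d for d in corpus if d.allowed_roles & user_roles]
    ranked = sorted(visible, key=lambda d: score(query, d), reverse=True)
    return [d for d in ranked[:top_k] if score(query, d) > 0]


# Hypothetical sample data for illustration.
CORPUS = [
    Document("hr-001", "salary bands for engineering staff", frozenset({"hr"})),
    Document("kb-002", "how to reset engineering laptop passwords", frozenset({"hr", "staff"})),
]

staff_hits = retrieve("engineering salary", {"staff"}, CORPUS)
hr_hits = retrieve("engineering salary", {"hr"}, CORPUS)
```

In this sketch a user holding only the `staff` role never sees the salary document, even though it is the best match for the query, because the filter runs before ranking.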

End-to-End Microsoft Ecosystem Expertise

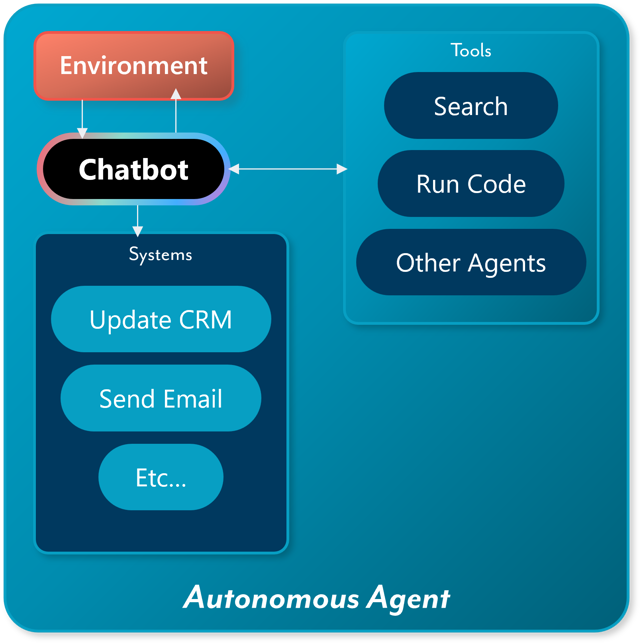

HSO delivers across Dynamics 365, Azure, Microsoft 365, and the Power Platform. This means LLM solutions are designed within the context of your full technology estate, not as isolated AI experiments. Whether connecting an LLM to ERP data, building agents that orchestrate across CRM and external systems, or integrating custom models into existing business applications, HSO delivers AI that fits the operational reality of your organization.

Our LLM Development Technology Stack

Azure AI Foundry

Azure AI Search

Microsoft Purview

Dynamics 365

Power Platform

Azure Machine Learning

Customers Driving Results with Custom AI & LLM Solutions

Organizations across industries trust HSO to build custom AI solutions that deliver measurable business outcomes.

Common LLM Development Challenges & Solutions

Every enterprise LLM initiative encounters structural, technical, and organizational obstacles. These are the challenges HSO addresses most frequently, and the approaches that overcome them.

"Our AI pilots never make it to production"

Challenge: This is the industry's most pervasive problem. By some industry estimates, up to 95% of generative AI projects fail. The root cause is rarely the model itself; it is fragmented data, missing MLOps infrastructure, and a lack of production-grade engineering discipline.

Solution: HSO's methodology is built for production, not demos. Every engagement follows the full LLMOps lifecycle: define the business workflow first, prepare the data foundation, build with production architecture from day one, deploy with monitoring, and optimize continuously. HSO's acquisition of Aware Group brings nearly a decade of experience specifically focused on bridging the gap between AI prototype and production system.

"We don't know whether to use RAG or fine-tuning"

Challenge: Misunderstanding the purpose of RAG versus fine-tuning is a leading cause of project failure and budget overruns. Organizations that fine-tune models to inject factual knowledge face catastrophic forgetting, data privacy issues, and expensive retraining cycles. Those that rely solely on RAG to control tone and format run into its limits, because retrieval supplies facts rather than behavior.

Solution: HSO provides objective architectural guidance. RAG is deployed for dynamic, fact-based retrieval where data changes frequently and access control matters. Fine-tuning is applied for static behavioral patterns: domain-specific tone, strict output formatting, and embedded industry expertise. For mature use cases, HSO implements hybrid RAFT (Retrieval-Augmented Fine-Tuning) architectures that combine the strengths of both approaches.

"Our data isn't ready for LLM development"

Challenge: LLMs cannot create value on fragmented, ungoverned data. Many organizations have critical knowledge scattered across disconnected systems: documents in SharePoint, data in ERPs, and insights buried in emails and spreadsheets. Without a unified, governed data estate, LLM outputs are unreliable and untrustworthy.

Solution: HSO's data-first methodology addresses this directly. Using Microsoft Fabric for data unification and Microsoft Purview for governance, HSO builds the data foundation before any model development begins. This includes data quality assessment, document ingestion pipeline design, vector database architecture, and access control configuration. The result is a data estate that makes LLM outputs accurate and compliant, not just functional.
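As a rough illustration of one step in a document ingestion pipeline, the sketch below splits a document into overlapping word windows and attaches governance metadata to each chunk before indexing. The function names, the record fields (`source`, `allowed_roles`), and the chunk sizes are assumptions chosen for the example, not a description of HSO's pipeline or of any Microsoft Fabric API.

```python
def chunk_text(text: str, chunk_size: int = 50, overlap: int = 10) -> list:
    """Split text into windows of chunk_size words that overlap by `overlap` words."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once the current window has reached the end of the text.
        if start + chunk_size >= len(words):
            break
    return chunks


def ingest(doc_id: str, text: str, source: str, allowed_roles: list) -> list:
    """Produce index-ready records: chunk text plus governance metadata."""
    return [
        {"id": f"{doc_id}-{i}", "text": chunk, "source": source, "allowed_roles": allowed_roles}
        for i, chunk in enumerate(chunk_text(text))
    ]


# Hypothetical 120-word document from a SharePoint source.
sample_text = " ".join(f"w{i}" for i in range(120))
records = ingest("d1", sample_text, "sharepoint", ["staff"])
```

Carrying `source` and `allowed_roles` on every chunk is one way to let later retrieval steps enforce access control and trace an answer back to its origin.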

"We're concerned about hallucinations and accuracy"

Challenge: LLMs are probabilistic systems that can generate confident-sounding but factually incorrect outputs. In enterprise contexts such as financial analysis, legal review, and customer-facing interactions, hallucinations create real business and regulatory risk. Traditional testing methods are insufficient because LLM outputs are unstructured and non-deterministic.

Solution: HSO architects RAG systems that ground every response in verified enterprise data, drastically reducing hallucination rates. Beyond architecture, HSO implements rigorous evaluation frameworks using automated metrics (ROUGE, BLEU, perplexity) and LLM-as-a-judge rubrics to continuously assess output quality. Guardrails including confidence scoring, citation requirements, and human-in-the-loop validation ensure that high-stakes outputs are verified before action is taken.
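For readers unfamiliar with the metrics named above, the following sketch shows simplified versions of two of them, plus a basic citation guardrail. It assumes whitespace tokenization, natural-log token probabilities supplied by the model, and a hypothetical `[doc-id]` citation format; production evaluation would normally rely on an established metrics library and a fuller rubric.

```python
import math
from collections import Counter


def rouge1_recall(reference: str, candidate: str) -> float:
    """Fraction of reference unigrams that also appear in the candidate (clipped counts)."""
    ref = Counter(reference.lower().split())
    cand = Counter(candidate.lower().split())
    overlap = sum(min(count, cand[token]) for token, count in ref.items())
    total = sum(ref.values())
    return overlap / total if total else 0.0


def perplexity(token_logprobs: list) -> float:
    """Exponential of the negative mean natural-log probability per token."""
    return math.exp(-sum(token_logprobs) / len(token_logprobs))


def has_citation(answer: str, retrieved_ids: set) -> bool:
    """Guardrail: the answer must cite at least one retrieved source as [doc-id]."""
    return any(f"[{doc_id}]" in answer for doc_id in retrieved_ids)


recall = rouge1_recall("the invoice is due friday", "invoice due friday")
ppl = perplexity([math.log(0.5)] * 4)
cited = has_citation("Payment is due Friday [inv-7].", {"inv-7"})
```

An answer that fails `has_citation` could be routed to human review instead of being returned directly, which is one simple way to combine automated checks with human-in-the-loop validation.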

"We need this to be secure and compliant"

Challenge: Feeding proprietary data into LLMs introduces severe risks around data privacy, intellectual property exposure, and regulatory compliance. The EU AI Act imposes strict requirements on high-risk AI systems, and organizations must ensure that sensitive data can be controlled, audited, and deleted on demand.

Solution: HSO builds LLM solutions within Microsoft's enterprise security framework by default. RAG architectures keep sensitive data external to model weights, so it can be deleted from vector indices immediately and without retraining, which is critical for GDPR and "right to be forgotten" compliance. Role-based access control ensures users only retrieve authorized data. Microsoft Purview provides data classification, sensitivity labeling, and audit trails. HSO embeds Microsoft's Responsible AI principles from day one, not as an afterthought.
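The deletion property can be sketched with a tiny in-memory stand-in for a vector store. Everything here is hypothetical (the `VectorIndex` class, the `subject_id` tag, the two-dimensional vectors); the point is only that removing rows from the index changes what can be retrieved while the model itself stays untouched.

```python
import math


class VectorIndex:
    """Tiny in-memory stand-in for a vector store (illustration only)."""

    def __init__(self):
        self._rows = {}  # chunk_id -> (vector, text, subject_id)

    def upsert(self, chunk_id, vector, text, subject_id=None):
        self._rows[chunk_id] = (vector, text, subject_id)

    def delete_subject(self, subject_id):
        """Remove every chunk tied to a data subject; return the number removed."""
        doomed = [cid for cid, row in self._rows.items() if row[2] == subject_id]
        for cid in doomed:
            del self._rows[cid]
        return len(doomed)

    def search(self, query_vector, top_k=3):
        """Return the texts of the top_k chunks ranked by cosine similarity."""
        def cosine(a, b):
            dot = sum(x * y for x, y in zip(a, b))
            norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
            return dot / norm if norm else 0.0

        ranked = sorted(self._rows.values(), key=lambda r: cosine(query_vector, r[0]), reverse=True)
        return [text for _, text, _ in ranked[:top_k]]


index = VectorIndex()
index.upsert("c1", [1.0, 0.0], "Alice's delivery address", subject_id="alice")
index.upsert("c2", [0.0, 1.0], "Standard return policy")

before = index.search([1.0, 0.0])
removed = index.delete_subject("alice")
after = index.search([1.0, 0.0])
```

After `delete_subject` runs, the chunk can no longer be retrieved. A real erasure process would also need to cover source systems, backups, caches, and logs, which this sketch does not model.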

From Language Models to Autonomous Agents

Frequently Asked Questions
