Why Choose HSO for AI Security Consulting

AI Security Consulting

AI Adoption Is Outpacing AI Security - And the Gap Is Growing

Most organizations have now adopted AI in at least one business function, but only 63% of generative AI initiatives are properly secured. That gap creates real risk, not from external attackers, but from the AI itself.

Sensitive records surface to employees who should not see them. Autonomous AI agents take actions no one explicitly authorized. Staff turn to public AI tools that process enterprise data outside any IT oversight.

HSO's AI security consulting closes that gap, helping organizations deploy Microsoft AI that is powerful, productive, and provably safe.

- 1

Microsoft Security Stack Expertise

Text: HSO deploys AI security controls using the tools organizations already trust: Microsoft Purview, Azure AI Content Safety, Microsoft Sentinel, Microsoft Defender, and Microsoft Entra ID. This is the same infrastructure underpinning Microsoft's own Secure Future Initiative - and HSO's consultants are certified to implement it across complex, regulated enterprise environments.

- 2

Security Built Into Every AI Engagement

HSO doesn't treat security as an afterthought. Every AI engagement - from an initial AI Briefing through to production deployment - applies security-by-design principles from the outset. That means AI systems are built to be safe at scale, not patched once problems emerge in production.

- 3

Responsible AI Aligned to Microsoft's Framework

HSO's AI security approach is grounded in Microsoft's six Responsible AI principles: fairness,reliability and safety, privacy and security, inclusiveness, transparency, andaccountability. This gives organizations a structured, auditable foundation for safe AI adoption that maps to international standards including the NIST AI RMF and ISO 42001.

- 4

Outcome-Focused AI Consulting

HSO delivers practical outcomes, not framework documents. Engagements are scoped to your organization's actual risk profile, starting with focused discovery and assessment, then moving to architecture, deployment, and ongoing assurance. For organizations taking their first step, HSO's AI Briefing provides an expert-led entry point to understand your current exposure and define a clear path forward.

Our AI Security Technology Stack

Microsoft Purview

Microsoft Defender

Azure AI Foundry

Microsoft Entra

Microsoft Sentinel

Our customers

Customers That Rely on Our AI Expertise

Common AI Security Challenges & Solutions

Most AI security risks don't arrive from the outside. They emerge from within, employees using unsanctioned tools, sensitive data surfacing in AI outputs, agents operating with unchecked access, or AI producing unreliable outputs that drive poor decisions. HSO addresses each of these systematically.

Employees Using Unsanctioned AI Tools

Challenge: 38% of employees have shared sensitive data with AI tools without permission. When staff use public AI chatbots to draft emails, summarize contracts, or analyze financial data, that information may be processed externally, stored, or used for model training. Blanket bans rarely solve the problem - they push usage further underground, creating blind spots rather than eliminating risk.

Solution: HSO uses Microsoft Defender and SIEM tooling to surface AI tools in use across your environment, including developer-built tools and browser extensions. Rather than blocking without replacing, HSO builds a path to sanctioned AI: deploying Microsoft 365 Copilot services or Azure OpenAI within your organization's own secure tenant as enterprise-approved alternatives that meet employee productivity needs without the data risk.

Sensitive Data Surfacing in AI Outputs

Challenge: Without proper access controls, AI systems like Microsoft 365 Copilot can return information employees were never meant to see. A poorly configured deployment can surface confidential HR records, financial data, or client files in response to ordinary queries - creating significant data exposure at scale, often without any visible warning.

Solution: HSO uses Microsoft Purview to classify data across your Microsoft 365 environment and apply sensitivity labels that AI systems are required to respect. Before any Copilot deployment, HSO audits and remediates SharePoint permissions to ensure the AI operates within the same access boundaries as your people. The result is an AI that is as trustworthy as the policies it enforces.

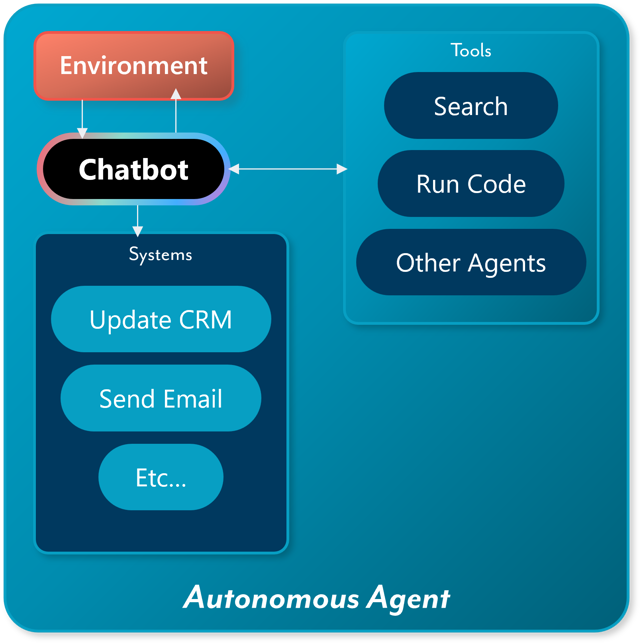

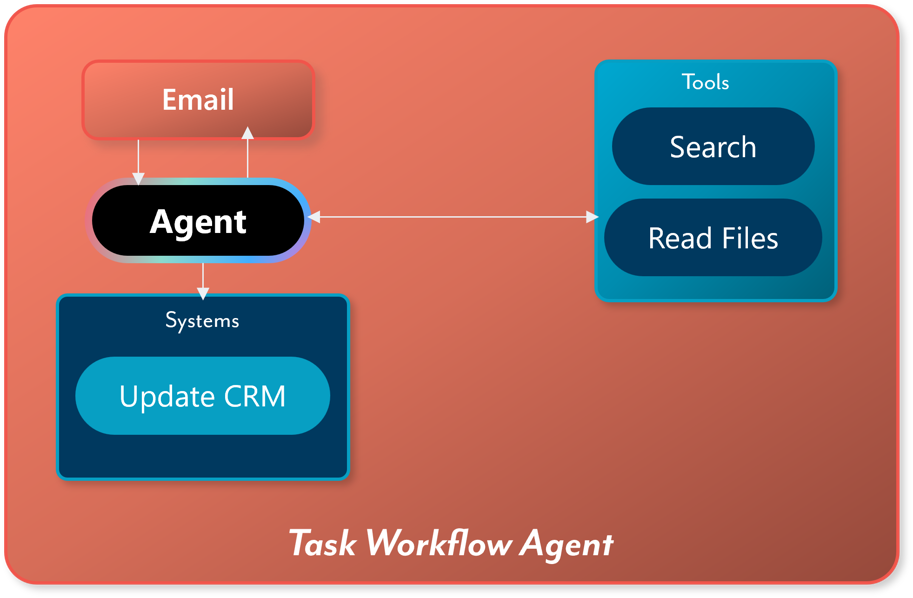

Autonomous Agents Acting Without Oversight

Challenge: Agentic AI moves from answering questions to taking actions - browsing the web, calling APIs, writing and executing code, sending emails. By 2028, 33% of enterprise software applications will include agentic AI, up from less than 1% in 2024. Without defined permission limits and human oversight built in, agents can take consequential actions no one explicitly authorized - and often no one is aware of until the damage is done.

Solution: HSO designs Microsoft AI solutions with least-privilege access, human-in-the-loop checkpoints for high-impact decisions, and documented override and shutdown protocols. Every agent is treated as a governed non-human identity - with scoped credentials, monitored activity logs, and defined escalation paths. Your teams retain full control over what the AI can do and when it requires human approval.

AI Producing Unreliable or Manipulated Outputs

Challenge: AI models can confidently generate incorrect information - known as hallucination - or be manipulated through prompt injection into ignoring their instructions and disclosing sensitive data. Prompt injection is the number one risk on the OWASP Top 10 for LLM Applications (2025). These risks are particularly acute for customer-facing deployments and any AI integrated with live business processes.

Solution: HSO implements Azure AI Content Safety to detect and block prompt injection attempts before they reach model inference. Groundedness detection is configured to ensure AI outputs are anchored in verified source materials rather than confabulated responses. For sensitive or customer-facing deployments, HSO conducts AI red teaming to stress-test models against real-world adversarial scenarios before go-live - and supports continuous testing as systems evolve.

AI Security Is an Executive Priority

According to Gartner, "the CFO must balance the risks and rewards of tools like generative AI" - ensuring that AI "creates value without introducing unacceptable risks." That responsibility doesn't sit with IT alone. It requires executive ownership, clear accountability, and a consulting partner who can turn that intent into a working security architecture.

HSO helps organizations build exactly that: a secure AI foundation grounded in Microsoft's enterprise AI tooling, aligned to international standards, and designed to scale alongside your AI ambitions.

Frequently Asked Questions

Common questions about securing AI systems within the enterprise.

Connect With Our AI Security Experts

Related Resources

Learn How We're Securing AI in the Enterprise